Best API Simulation Tools in 2026: a Hands-on Review

"Mock server" used to describe one kind of tool. Today it covers three distinct categories — API simulation and mocking, service virtualization, and infrastructure simulation — and the right one depends on what you're actually standing in for: a single endpoint, a stateful partner system running over gRPC or SOAP, or the cloud infrastructure underneath your service. This piece walks through the leading tools in each category, what they're built for, and how to pick.

Tools covered in this list

- WireMock Cloud — hosted API simulation and modern service virtualization with native AI integration.

- Mockoon — open-source desktop app for fast, local HTTP mocking by individual developers.

- Beeceptor — hosted, zero-setup browser-based mocking for prototyping and demos.

- Postman Mock Servers — mocking tied directly to Postman collections and documentation.

- MockServer — Java-native open-source mocking library and standalone server for JVM stacks.

- Parasoft Virtualize — long-standing enterprise service virtualization with broad protocol coverage.

- Broadcom Service Virtualization — incumbent SV platform (formerly CA LISA) for enterprise messaging stacks.

- Traffic Parrot — focused SV player aimed at IBM MQ, JMS, and enterprise messaging.

- LocalStack — local AWS emulator that runs cloud services in a single container.

- Testcontainers — throwaway Docker containers for real dependencies during integration tests.

- Moto — Python library that mocks AWS calls made through boto3 in-process.

- Prism (Stoplight) — OpenAPI-driven stub server for design-first contract validation.

- Pact — consumer-driven contract testing framework for services shipped independently.

Different types of simulation

Rather than flatten these into one ranked list, we've grouped them by what they actually do, using five questions:

- What's being simulated — a single endpoint, a partner system's behavior, or a backing service like S3 or Kafka

- Statefulness — does the tool remember what happened in the last request, or does every call stand alone

- Protocol coverage — HTTP/REST only, or also gRPC, SOAP, JMS, webhooks, and event streams

- Where it runs — a developer's laptop, a CI job, or a hosted environment shared across teams

- Team vs. solo use — Git-native workflows, role-based access, and governance, or a personal productivity tool

That gives us three primary categories plus a sidebar:

- API simulation and mocking: endpoint-level stubs for developers and testers

- Service virtualization: stateful, multi-protocol simulation of partner and downstream systems, historically an enterprise category

- Infrastructure simulation: emulating cloud services and backing infrastructure

- Spec and contract-driven tools: sidebar category, useful adjuncts rather than direct competitors

One note on overlap. WireMock Cloud spans API simulation and service virtualization, which is why it appears in both sections: the same platform handles quick endpoint stubs and stateful multi-protocol scenarios. LocalStack, Testcontainers, and Moto sit in their own category because they solve a different problem: standing up cloud infrastructure and backing services locally, not simulating APIs your application calls.

API simulation and mocking — the dev-loop workhorses

These are the tools you reach for when you need a fake HTTP endpoint in front of your code — to keep CI green when a dependency is flaky, to prototype a client before the server exists, or to let ten developers work against the same contract without fighting over a shared sandbox.

WireMock Cloud

One thing to flag up front — WireMock Cloud is ours. We've tried to apply the same criteria to it as every other tool on this list, but read accordingly.

WireMock Cloud is the hosted API simulation platform built on top of the open-source WireMock project, which has been the de facto HTTP stubbing library in the JVM world for over a decade and has broad adoption outside it via its standalone server.

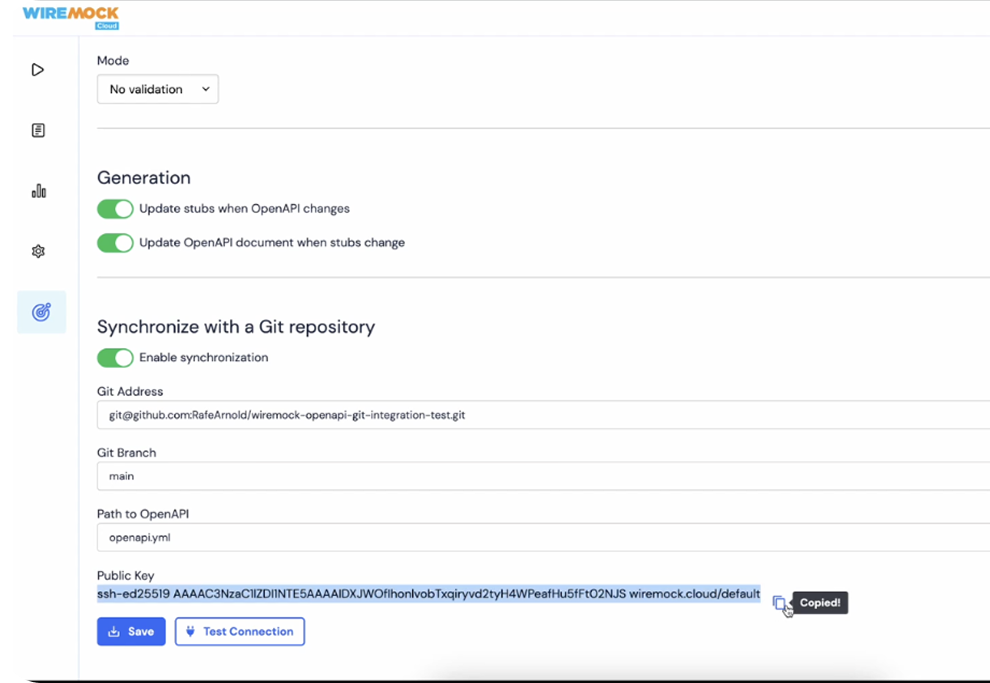

It takes the familiar mapping model and adds the pieces that individual-developer tools skip: stateful scenarios for multi-step flows, proxy-and-record to capture real traffic from a live API and replay it as a simulated response, shared workspaces with role-based access, and Git- and CI-native workflows so stubs live alongside the code that depends on them.

The result is the one tool in the category that stretches comfortably from a single engineer stubbing an endpoint on a laptop to a platform team governing simulations across dozens of services — which is why it also shows up in the service virtualization section below. If your team has outgrown a local mock file checked into a repo but doesn't want to buy a legacy SV suite, this is the obvious landing spot.

Mockoon

Open-source desktop app with a clean GUI for defining routes and responses locally. Ideal for solo developers who want a fast, zero-cloud way to stand up a mock during development. Mockoon Cloud now offers real-time team collaboration, but governance depth and CI-native workflows are lighter than dedicated hosted platforms — fine for small teams, less so once you need role-based access, audit trails, or stubs living in Git alongside service code.

Beeceptor

Hosted, zero-setup mocking aimed at quick prototyping — you get a public endpoint in seconds and can define rules from the browser. A good fit for early-stage API design work, demos, or throwaway integrations, but the feature set around stateful behavior, protocols beyond HTTP, and team workflows is lighter than what WireMock Cloud offers.

Postman Mock Servers

Mocking tied directly to Postman collections, so the barrier to entry is essentially zero if your team already documents APIs there. Convenient for Postman-centric shops; the tradeoff is that your mocks are coupled to the Postman ecosystem and its collection model, which can feel constraining once you need scenario logic or CI integration beyond what Postman's runner supports.

MockServer

Java-native open-source mocking library and standalone server, widely used in JVM-heavy environments and frequently paired with JUnit and Testcontainers (there's an official Testcontainers module). A reasonable pick if your stack is already Java and you want a library-first tool you can embed in tests; less appealing if you need a hosted control plane, a GUI, or cross-team governance.

Service virtualization for stateful, multi-protocol enterprise flows

The API simulation and mocking tools above handle endpoint-level stubs well. Service virtualization (SV) targets the harder problem: simulating the behavior of a whole partner system across a multi-step flow. Think payment authorizations that depend on prior state, mainframe integrations over MQ, partner APIs that issue asynchronous webhooks, or SOAP and gRPC services buried deep in an enterprise stack. Teams need SV when the thing they're simulating has memory, speaks more than HTTP, and interacts with other systems in ways a static stub can't fake. Historically this category was owned by a few enterprise incumbents whose tools were expensive, desktop-bound, and Git-averse. That's changed.

WireMock Cloud

WireMock Cloud brings SV out of the desktop IDE and into the workflows engineers already use. Stateful scenarios let a simulated partner remember what happened on request N when request N+1 arrives — useful for order flows, auth handshakes, and anything where a response depends on prior state. It supports gRPC and SOAP alongside REST, plus outbound webhooks for async callbacks, so simulations cover the protocols real enterprise integrations use. Specs and scenarios are stored as code, version-controlled in Git, and runnable directly from CI pipelines — no proprietary project format, no licensed desktop client, no central SV team to file tickets with. For teams modernizing off legacy SV or standing up stateful simulation for the first time, it's the pragmatic choice: the protocol coverage enterprise buyers need, delivered on a stack developers already use.
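To make "remembering request N when request N+1 arrives" concrete, here is a minimal sketch. The dictionaries follow the field names of the open-source WireMock JSON stub-mapping format (`scenarioName`, `requiredScenarioState`, `newScenarioState`, with `Started` as the initial state). The scenario name, URLs, and response bodies are hypothetical, and the `ToyScenarioServer` class is only an illustration of how scenario state gates which stub matches, not WireMock's implementation.

```python
# Stub mappings written as Python dicts that mirror WireMock's JSON format.
# The "order-flow" scenario, paths, and bodies are made up for illustration.
MAPPINGS = [
    {
        "scenarioName": "order-flow",
        "requiredScenarioState": "Started",  # initial state of every scenario
        "request": {"method": "GET", "urlPath": "/orders/123"},
        "response": {"status": 200, "jsonBody": {"status": "PENDING"}},
    },
    {
        "scenarioName": "order-flow",
        "requiredScenarioState": "Started",
        "newScenarioState": "Paid",  # matching this stub moves the scenario on
        "request": {"method": "POST", "urlPath": "/orders/123/pay"},
        "response": {"status": 202},
    },
    {
        "scenarioName": "order-flow",
        "requiredScenarioState": "Paid",
        "request": {"method": "GET", "urlPath": "/orders/123"},
        "response": {"status": 200, "jsonBody": {"status": "PAID"}},
    },
]


class ToyScenarioServer:
    """Illustrative only: a stub matches when both the request and the
    scenario's current state match, and may then advance the state."""

    def __init__(self, mappings):
        self.mappings = mappings
        self.states = {}  # scenario name -> current state

    def handle(self, method, path):
        for mapping in self.mappings:
            request = mapping["request"]
            scenario = mapping["scenarioName"]
            current = self.states.get(scenario, "Started")
            request_matches = (request["method"], request["urlPath"]) == (method, path)
            if request_matches and current == mapping["requiredScenarioState"]:
                if "newScenarioState" in mapping:
                    self.states[scenario] = mapping["newScenarioState"]
                return mapping["response"]
        return {"status": 404}  # no stub matched in the current state
```

The same `GET /orders/123` returns `PENDING` before the payment call and `PAID` after it, which is the behavior a static stub cannot reproduce.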

Parasoft Virtualize

Parasoft Virtualize has a long track record in large enterprises, particularly in regulated industries where SV investments were made a decade or more ago. It handles a wide range of protocols and ships as an Eclipse-based desktop IDE (standalone or plug-in) plus dedicated Virtualize Server components for shared environments. The tradeoffs are familiar to anyone who's inherited a Parasoft install: proprietary project formats, specialized skills concentrated on a few engineers, and licensing costs that tend to show up as significant annual renewals. If your team is already invested and the skills are in-house, it works. If you're evaluating fresh, the friction with Git-based and CI-driven workflows is worth weighing seriously.

Broadcom Service Virtualization (formerly CA LISA)

Broadcom SV, the product formerly known as CA LISA, is the other long-standing enterprise incumbent in the space. It covers a broad protocol set, including HTTP-based REST and SOAP, JMS (including TIBCO), IBM MQ (formerly WebSphere MQ), and mainframe integrations via CICS and IMS Connect, and is commonly found in banks, insurers, and telcos. The constraints track Parasoft's: rigid tooling, specialized authoring skills, and total cost of ownership that tends to surprise teams doing honest math on licenses, infrastructure, and the headcount needed to maintain virtual services. It does what it does competently; whether that still matches how your teams want to work is the harder call.

Traffic Parrot

Traffic Parrot is a smaller, focused player in the SV space. Its pitch centers on protocol breadth for enterprise messaging — IBM MQ, JMS, Thrift, and file transfers, with Kafka available as part of their beta programme — in territory where the big incumbents feel heavy and the lightweight mocking tools don't reach. For teams with a specific need in that niche, it's worth a look. For broader SV across modern stacks, the surface area is narrower than the incumbents or WireMock Cloud.

Infrastructure simulation when the thing you need to fake isn't an API

The tools in this section solve a different problem from the previous two categories. Instead of standing in for a partner API or a stateful business system, they stand in for the backing services your code talks to — an S3 bucket, a Postgres instance, a Lambda invocation, an SQS queue. That's a separate job from API simulation, and most real stacks end up needing both: a simulator for the third-party APIs your service integrates with, and an infrastructure simulator for the cloud plumbing underneath. WireMock Cloud sits in the first bucket; the tools below sit in the second, and we'd happily see them running side by side.

LocalStack

The leading option for emulating AWS locally. LocalStack runs as a single container that stubs out the core AWS services — S3, SQS, SNS, DynamoDB, Lambda, Kinesis, IAM — in its free Community tier, with dozens more services and advanced features (RDS, EKS, Cognito, persistence, and others) behind the paid plans. The developer-experience win is real: faster feedback, no shared-account contention, no surprise bill when a misconfigured test loop runs overnight. It's the tool most AWS-heavy teams we talk to already have in their stack, and deservedly so. Worth being clear-eyed about the tradeoffs — emulation fidelity varies by service, and some of the more advanced or recently launched AWS features sit behind the paid tiers — but as a category leader for local AWS development, it's hard to argue with.

Testcontainers

Strictly speaking, Testcontainers isn't simulation at all. It spins up real dependencies — Postgres, Kafka, Redis, Elasticsearch, and hundreds of other images from the Testcontainers module catalog — in throwaway Docker containers that live only for the duration of your test. We're including it here because teams reach for it to solve the same problem: "I need a predictable backing service my tests can rely on." Running the real thing means you sidestep emulation-fidelity questions entirely; the cost is slower test startup, a Docker dependency on every CI runner, and more resource pressure than a lightweight stub. For integration tests against databases and message brokers in particular, many teams prefer real-over-fake, and Testcontainers is the cleanest way to get there.

Moto

A Python library that mocks AWS service calls made through boto3, typically via a decorator or context manager on individual tests. Lighter and more surgical than LocalStack: Moto lives inside the test process rather than running as a separate container, which makes it fast and trivial to wire into existing pytest suites. Coverage spans the AWS services Python developers most commonly touch — S3, DynamoDB, SQS, SNS, IAM, and a long tail of others — and it's especially popular for unit-testing serverless code. One caveat: IAM authorization simulation in Moto is intentionally basic, so don't lean on it for policy-enforcement tests. If you're in the Python/boto3 world and don't need a full local AWS environment, Moto is usually the lower-friction choice; if you need cross-language emulation or are testing code that shells out to the AWS CLI, LocalStack is the better fit.

Spec and contract-driven tools solve an adjacent problem, not simulation

These two tools come up constantly in API simulation buying conversations, but neither is really an API simulator. Prism turns an OpenAPI spec into a running stub server — useful for design-first validation, not for modeling stateful partner behavior. Pact is a contract testing framework, not a mock at all, though its stub-interaction model looks superficially similar. Worth knowing where they fit so you don't buy one expecting the other.

Prism (Stoplight)

Prism reads an OpenAPI or Swagger document and serves responses derived from the spec. If your team is writing specs before implementations exist — the "design-first" workflow — Prism gives frontend and downstream consumers something to hit while the real service is being built. By default it returns a declared example when one exists and generates a static response from the schema when it doesn't, with an opt-in dynamic mode that uses Faker.js to produce randomized, schema-conformant responses on every request.
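The default selection order described above (declared example first, schema-derived static response otherwise) can be sketched in a few lines. This is an illustration of the idea, not Prism's code: the input is assumed to be an OpenAPI Media Type Object parsed into a dict, and the placeholder values chosen for each type (`"string"`, `0`, `False`) are assumptions of this sketch rather than what Prism actually emits.

```python
def static_from_schema(schema):
    """Build a deterministic placeholder value from a JSON-Schema-like dict."""
    if "example" in schema:
        return schema["example"]
    schema_type = schema.get("type")
    if schema_type == "object":
        properties = schema.get("properties", {})
        return {name: static_from_schema(sub) for name, sub in properties.items()}
    if schema_type == "array":
        return [static_from_schema(schema.get("items", {}))]
    if schema_type == "string":
        return "string"
    if schema_type in ("integer", "number"):
        return 0
    if schema_type == "boolean":
        return False
    return None  # unknown or missing type


def pick_response(media_type):
    """Prefer a declared example; fall back to a value built from the schema."""
    if "example" in media_type:
        return media_type["example"]
    examples = media_type.get("examples")
    if examples:
        # Each entry is an OpenAPI Example Object; use the first one's value.
        return next(iter(examples.values()))["value"]
    return static_from_schema(media_type.get("schema", {}))
```

Either way the response is a function of the spec alone, which is why nothing in this model can depend on earlier requests.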

The tradeoff is scope. Prism is stateless and tied to what the spec describes, which means stateful flows, conditional logic, sequenced scenarios, and realistic failure modes are out of scope compared to dedicated simulation tools. It's a good fit for contract validation during design, not for testing how your service behaves when a downstream partner returns a 503 mid-transaction. Teams often pair it with a simulation tool that handles the messier cases.

Pact

Pact does consumer-driven contract testing. Consumers declare the interactions they expect — "when I call GET /orders/123, I need a response shaped like this" — and those expectations become a contract the producer verifies against its real implementation. The consumer side uses stub interactions during its own tests, which is why Pact gets lumped in with mocking tools. The problem Pact solves is different, though: it's about preventing integration drift between teams shipping independently, not about simulating a system you can't access.
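The consumer-driven idea can be shown without any framework. The sketch below is plain Python, not Pact's API: the contract layout, the service names, and the two provider functions are hypothetical, and the matching rule (every expected field must be present with the same value, extra fields are tolerated) is a simplification of what a real contract-testing tool does.

```python
# A contract recorded from the consumer's expectations (hypothetical names).
CONTRACT = {
    "consumer": "checkout-ui",
    "provider": "orders-service",
    "interactions": [
        {
            "description": "a request for order 123",
            "request": {"method": "GET", "path": "/orders/123"},
            "response": {"status": 200, "body": {"id": 123, "status": "PAID"}},
        }
    ],
}


def body_matches(expected, actual):
    """Expected fields must exist with equal values; extra fields are allowed."""
    if isinstance(expected, dict):
        return isinstance(actual, dict) and all(
            key in actual and body_matches(value, actual[key])
            for key, value in expected.items()
        )
    return expected == actual


def verify_provider(contract, provider):
    """Replay each recorded request against the provider; return failures."""
    failures = []
    for interaction in contract["interactions"]:
        request = interaction["request"]
        expected = interaction["response"]
        status, body = provider(request["method"], request["path"])
        if status != expected["status"] or not body_matches(expected["body"], body):
            failures.append(interaction["description"])
    return failures


def compatible_provider(method, path):
    # Adds a field the consumer never asked for; that is not a breaking change.
    return 200, {"id": 123, "status": "PAID", "currency": "EUR"}


def drifted_provider(method, path):
    # Renames "status" to "state"; this breaks the consumer's expectation.
    return 200, {"id": 123, "state": "PAID"}
```

Running `verify_provider` in the provider's pipeline is what catches the renamed field before deploy; at no point is an inaccessible system being simulated.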

If your pain is "we keep breaking each other's services on deploy," Pact is the right category. If your pain is "we can't test because the partner sandbox is down or doesn't support our scenarios," it isn't. The two coexist cleanly — WireMock Cloud runs alongside Pact workflows via documented integrations (including PactFlow's bi-directional contract testing and a wiremock-pact-generator library), handling scenario-level simulation while Pact enforces contract compatibility across teams.

Simulation is now table stakes for AI-driven development

The category has a second buyer now: the AI coding agent. Claude Code, Cursor, Copilot and the rest are writing real code against real dependencies, and the same reasons humans need simulated environments apply double to them — with a few new ones layered on top.

Two things are worth separating. First, why agents need simulation in the first place. Second, which tools actually meet agents where they work — through MCP, IDE plugins, or skills the agent can invoke directly.

Why agents need simulated environments

You don't want a coding agent hitting your production payment API to figure out the response shape. You don't want it spinning up real AWS resources on your account during an exploratory test loop. And you definitely don't want it calling a partner sandbox a thousand times to converge on a working integration. Simulation gives the agent a safe, controllable target to iterate against — the same argument as for human developers, just with the iteration count cranked up an order of magnitude.

There's also a less obvious reason, specific to evaluating agent behavior. LLM-powered agents are non-deterministic: the same prompt and tools can produce different behavior across runs, so a single passing test tells you almost nothing. Meaningful evaluation means running the agent hundreds or thousands of times against a fixed environment and looking at the failure distribution. That's only possible if API responses are locked down — otherwise environmental drift contaminates every benchmark. Stateful simulation also matters more for agents than for traditional tests: an agent that books a hotel room needs the simulated availability API to remember, on the next call, that the room is now booked. WireMock's piece on why AI agents need simulated environments goes deeper on both points.
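A rough sketch of that evaluation loop follows. Everything here is hypothetical: `SimulatedHotelApi` stands in for a stateful simulated availability API, `flaky_agent` stands in for an LLM agent (with a seeded random generator modelling its non-determinism), and the outcome labels are invented for the example. The point is the shape of the loop: a fresh, identical environment per run, many runs, and a distribution of outcomes rather than a single pass or fail.

```python
import random
from collections import Counter


class SimulatedHotelApi:
    """Stateful stand-in for an availability API; every run starts identical."""

    def __init__(self):
        self.available = {"101", "102"}

    def book(self, room):
        if room not in self.available:
            return 409  # unknown room or already booked
        self.available.remove(room)
        return 200


def flaky_agent(api, rng):
    """Hypothetical agent: sometimes picks a bad room, sometimes retries."""
    room = rng.choice(["101", "102", "999"])
    if api.book(room) != 200:
        return "booked_unavailable_room"
    if rng.random() < 0.1:
        # The agent repeats a booking it already made. A stateful simulation
        # must reject it; a stateless stub would wrongly accept it.
        return "double_booked" if api.book(room) == 200 else "redundant_retry"
    return "ok"


def evaluate(runs, seed=0):
    """Run the agent many times against fresh environments; tally outcomes."""
    rng = random.Random(seed)
    outcomes = Counter()
    for _ in range(runs):
        outcomes[flaky_agent(SimulatedHotelApi(), rng)] += 1
    return outcomes
```

Because the simulated API is reset to the same state for every run, any change in the outcome distribution between two evaluations can be attributed to the agent rather than to the environment.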

Where each tool lands on AI

Coverage varies a lot. Most of the tools in this article were designed before agentic coding was a real workflow, and it shows.

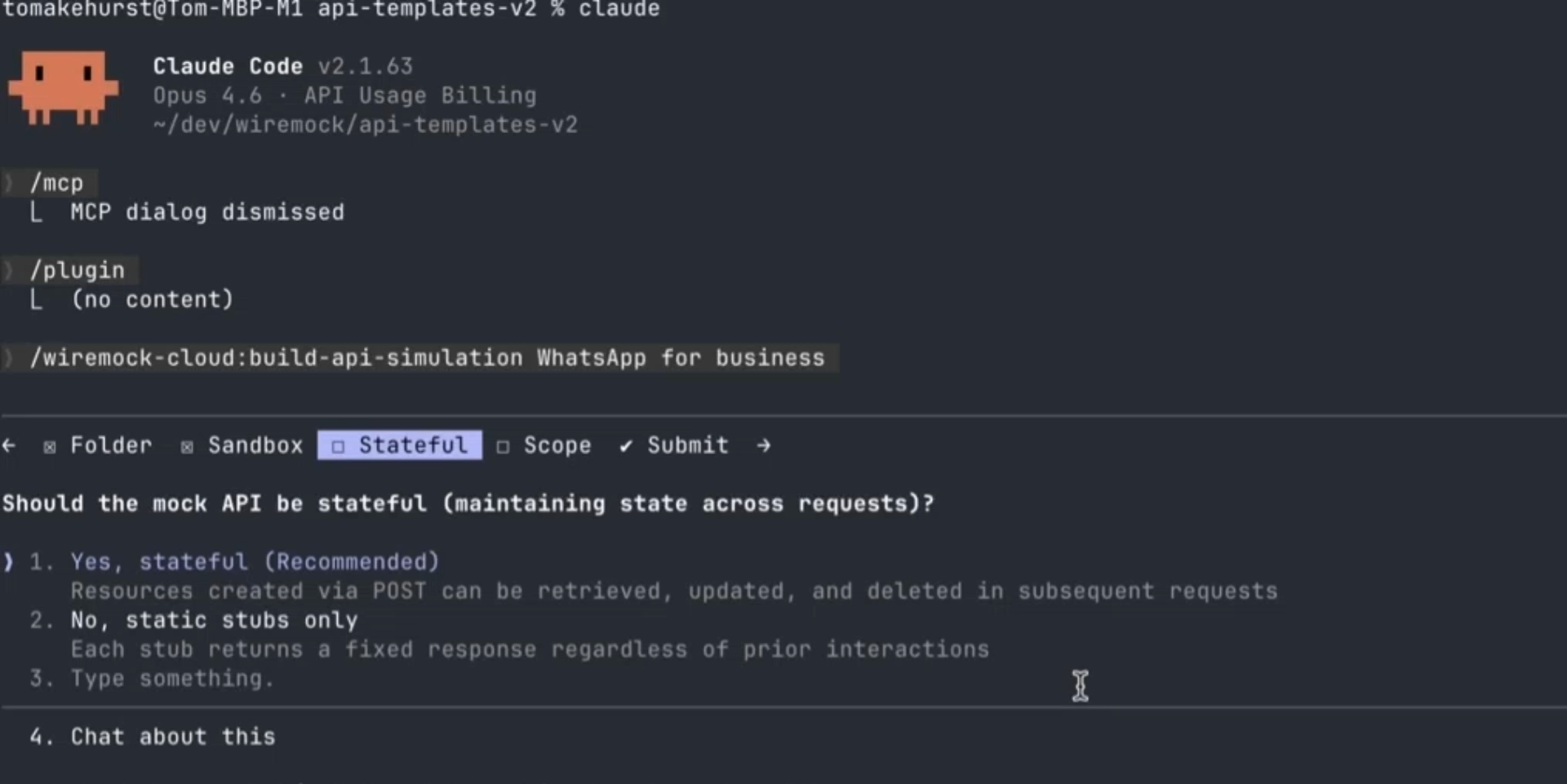

- WireMock Cloud is the one platform in this roundup built explicitly for AI-assisted and AI-led development. Its native MCP server connects coding agents directly to the simulation layer, so an agent can generate stubs, run code against them, read machine-readable diagnostics on what failed, and iterate — without a human in the loop. On top of that, WireMock's recently launched Agent Skills give Claude Code, Cursor, Copilot, and any other skills-aware agent a set of WireMock-specific workflows to invoke: building a full simulation from an OpenAPI spec, generating new stubs for an existing mock API, converting basic stubs into stateful or data-driven ones, validating and fixing stubs against a schema, and authoring response templates. Where MCP gives the agent the ability to call WireMock Cloud, skills give it the expertise to use it well. WireMock AI also generates simulations from natural-language prompts, recorded traffic, or specs — useful when a developer wants to bootstrap a simulation in a single step rather than hand-crafting stubs.

- LocalStack is the AI-relevant option in infrastructure simulation. Agents writing AWS code need somewhere to run that isn't a real account, and LocalStack is the obvious target. It also ships an MCP server so agents can interact with the local cloud directly. Coverage limits in the free tier still apply — the agent can only exercise what LocalStack actually emulates.

- Testcontainers doesn't have a dedicated AI story, but it benefits from one indirectly: agents can spin up real Postgres, Kafka, or Redis instances in throwaway containers without permission to touch shared infrastructure. The Docker requirement is the friction point; on a developer's laptop that's fine, in a sandboxed agent environment it's something to plan for.

- Mockoon, Beeceptor, Postman, MockServer, Parasoft, Broadcom, Traffic Parrot, Prism, Pact, Moto — none of these have meaningful native integration with coding agents at the time of writing. Some are usable from an agent in the trivial sense that any HTTP API is — the agent can read the docs and call the REST endpoints — but the agent-shaped pieces (an MCP server, a skill, codebase-aware stub generation, machine-readable diagnostics in a feedback loop) aren't there. For teams whose simulation strategy assumes agents are part of the workflow, that gap will matter more over the next year, not less.

The deeper point, well made in WireMock's piece on simulation vs. mocking vs. virtualization, is that the terminology debate is mostly noise — what teams actually want is dynamic, stateful, CI-native simulation they can drive from code. Agents want the same thing, just with an MCP endpoint and a set of skills attached.

Match the tool to what you're actually simulating

The categories above aren't interchangeable, and picking the wrong one is how teams end up six months into a rollout realizing their "mock server" can't model a stateful partner flow — or that their service virtualization platform is massive overkill for what was really an endpoint-stubbing problem. A quick way to orient yourself:

- Endpoint-level stubs for the inner dev loop and CI stability. You need fast, reliable responses to specific HTTP requests so local development and pipelines stop depending on someone else's uptime. This is API simulation and mocking territory — WireMock Cloud, Mockoon, Beeceptor, Postman mocks, MockServer.

- Stateful partner systems, enterprise integrations, or complex protocol flows. You're modeling a payment processor, a mainframe, a SOAP or gRPC backend, or a partner API with sessions and multi-step scenarios. This is service virtualization — and the practical choice today is modern SV like WireMock Cloud, not the legacy incumbents that still demand desktop IDEs and six-figure renewals.

- Cloud infrastructure — queues, buckets, Lambda, DynamoDB, local Kafka. You're not mocking an API, you're standing in for AWS or a backing service. Reach for infrastructure simulation: LocalStack, Testcontainers, Moto.

- Spec-driven stubs and contract testing. Prism and Pact live here. They usually sit alongside your simulation layer, not in place of it: Prism for design-first stubs generated from an OpenAPI spec, Pact for consumer-driven contracts between services you own.

Most real stacks run two or three of these together. A typical setup: Testcontainers for the database and queue, WireMock Cloud for third-party APIs and the stateful partner integrations, Pact for internal service contracts. Treat the categories as complementary, not competitive.

Try WireMock Cloud

If API simulation or modern service virtualization is what you actually need, WireMock Cloud is the category leader on both — stateful scenarios, gRPC and SOAP, proxy-and-record, team workflows, and a CI story that doesn't require a dedicated SV team to keep running.
