The Future of Test Environments for Agentic Coding: Expert Panel Recap


Key takeaways from our recent CTO Panel with LocalStack, StrongDM, and Docker

Pre-production environments have long been a bottleneck in software delivery, and that pressure is mounting, driven by agents producing code at unprecedented speed and by organisations accelerating the production of agentic systems. To make sense of the noise, Tom Akehurst, CTO of WireMock, hosted an expert panel with three guests: Michael Irwin, Principal Engineer at Docker; Justin McCarthy, co-founder and CTO of StrongDM; and Waldemar Hummer, CTO and co-founder of LocalStack.

Their conversation covered how environments need to evolve, what simulation and fidelity mean in this new context, how their companies are applying these ideas in practice, and which human skills will remain indispensable as automation accelerates.

(You can watch the full discussion here, and we've also included a few relevant snippets below)

Conceptualising environments in the agentic era

The panellists agreed that the traditional model (building a full-scale production replica, running tests against it, and shipping if green) is no longer sufficient. However, they differed on how much reinvention is needed.

- Waldemar (LocalStack) pointed to “a widening testing and validation gap”, observing that "The percentage of code being generated to what actually hits production is lowering and lowering." The implication is that robust environments are more critical than ever; without effective ways to test AI-generated code at scale, organisations risk flooding their pipelines with “slop.”

- Michael (Docker) drew a parallel with the DevOps movement of the previous decade. When teams began to accelerate deployment, bottlenecks simply shifted from release schedules to test reliability and infrastructure complexity. But while the container revolution addressed some of those issues, the agentic era may have a partial solution inbuilt: "The AI that we have today is the dumbest it's going to be going forward," Irwin noted. "It's only going to continue to get better."

- For Justin (StrongDM), environments are “just one component of a broader validation problem: how do you know your validation is right? How do you know the thing that generated the validation is right?” He stressed that more emphasis must be placed on how data is generated and amplified to flow through environments, and on how actions within the system are observed. The point is that environments serve validation, not the other way around.

Redefining simulation and fidelity

The panel also broadly agreed that the requirement to make the test environment as close to production as possible is giving way to a more deliberate approach: which elements need to be realistic, to what degree, and for what purpose?

- Waldemar (LocalStack) described how experimentation at scale is now happening in ways previously deemed impossible, citing examples of teams running parallel versions of a software stack to measure the impact of changes across the full codebase. "It's almost like being able to create parallel universes of your existing software stack and then experimenting," Waldemar explained. “I can change something here or there, and then see what the impact and outcome is going to be of a change across the codebase and the app.”

- Michael (Docker) made the point that AI tools perform best when they operate within well-defined architectural boundaries. Teams with clear service interfaces and testable contracts find it much easier to integrate coding agents into their workflows. "If it's hard for a human to interpret, how are we going to provide the context that linguistic agents are going to understand?" Irwin argued. In this sense, the shift toward agentic development is reinforcing long-standing architectural best practices, but with a stronger incentive to follow through.

- Tom (WireMock) noted that the industry has never been good at articulating what developers know about what makes a test trustworthy. “This thing about levels of fidelity in an environment – that's a kind of tacit understanding that developers have at the moment. But codifying that by saying, 'I can hand this off to a machine and reliably have it automate' – that's still a problem with some way to go.”

Simulation in the real world

When each panellist shared how their organisation is applying these ideas in practice, a common theme emerged: their companies are all building some form of feedback loop designed to progressively reduce the need for human intervention.

- Michael (Docker) described a culture of experimentation at Docker, including Slack channels dedicated to sharing emerging agentic best practices, pull requests increasingly authored by AI, and product managers submitting code for the first time. The rapid prototyping that agents enable has broadened participation in development across the organisation.

- LocalStack is rolling out Claude Code across its organisation and seeing acceleration in API coverage for its AWS emulator. Waldemar drew a line between what's changing and what isn't. "Code is becoming increasingly cheap, almost asymptotically free," he argued. "But we still need software that has a level of ownership where somebody needs to write the specs, somebody needs to have an overview of the entire complexity of that whole system."

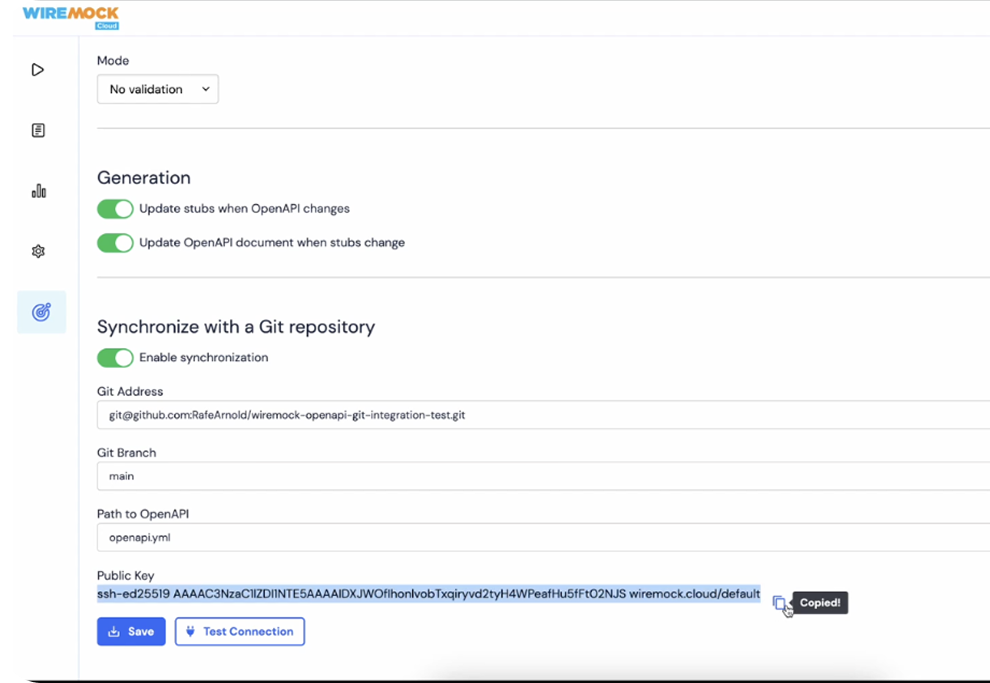

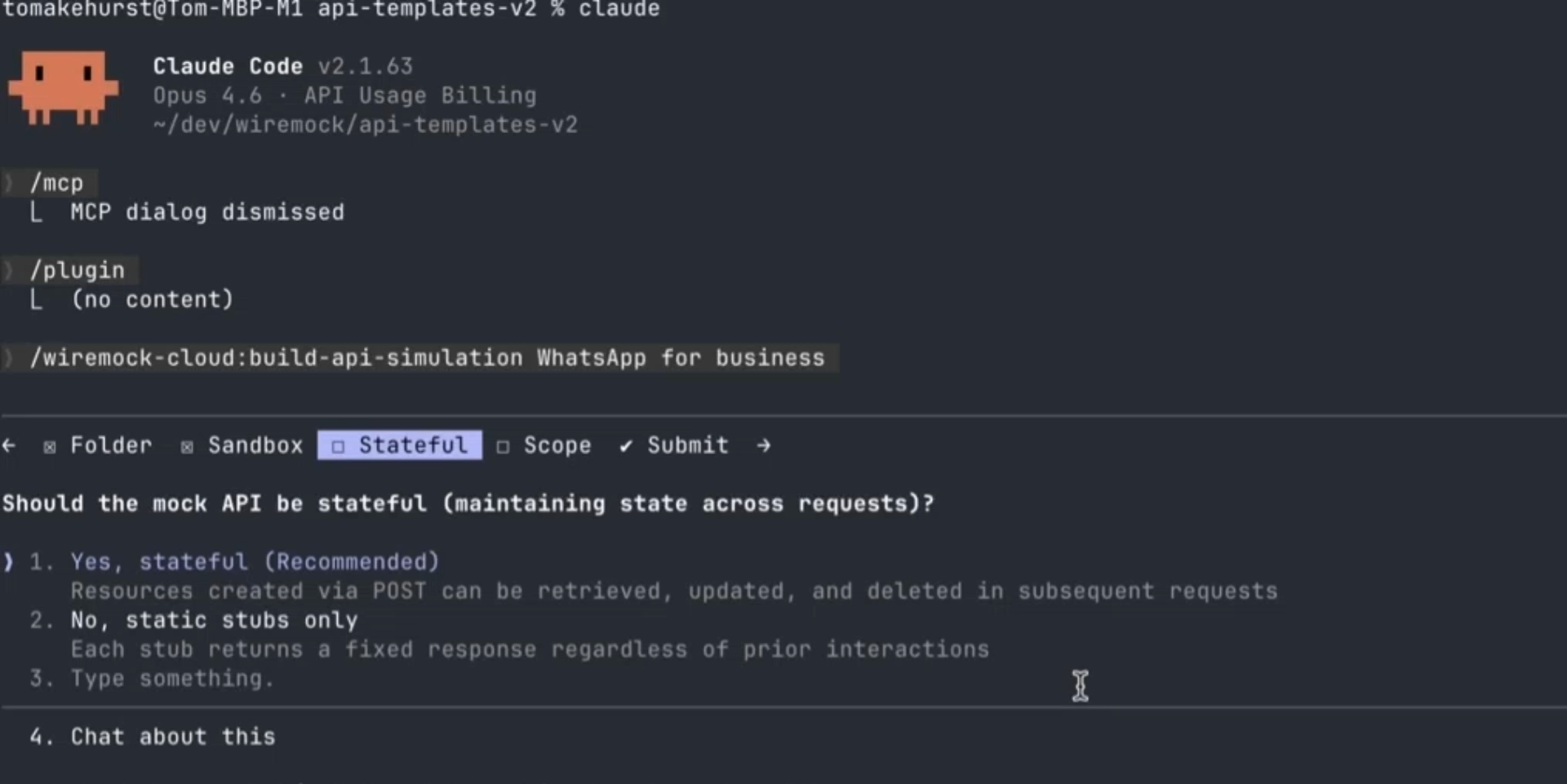

- Tom described WireMock's approach to using AI for building API simulations. By pointing agents at OpenAPI specifications and other documentation, the team can generate simulations of third-party APIs, with the spec itself serving as a guardrail for validity. When the agent gets something wrong, it writes a summary of what failed, and that summary is folded back into the skill text to improve future attempts: a progressive feedback loop aimed at eventual one-shot accuracy.

- Justin explained StrongDM's workflow as biphasic: an initial interactive stage followed by an autonomous period. First, engineers use tools like Claude Code in a REPL to build up “a tranche of intent,” which is then sent to an autonomous coding agent that grinds through implementation over hours or days without human involvement.
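
As a rough illustration of the feedback loop Tom describes (generate a simulation, check it against the spec, fold the failure summary back into the skill text), here is a minimal Python sketch. Everything in it is an assumption made for illustration: `SPEC` is a drastically simplified stand-in for an OpenAPI document, and `Stub`, `validate`, `refine`, and `fake_agent` are hypothetical names. It does not use WireMock's API or any real agent API, and it is not a description of how WireMock implements this.

```python
from dataclasses import dataclass

# Hypothetical, simplified stand-in for an OpenAPI document:
# maps (method, path) to the status codes the spec allows.
SPEC = {
    ("GET", "/users/{id}"): {200, 404},
    ("POST", "/users"): {201, 400},
}


@dataclass
class Stub:
    """A simulated endpoint produced by the agent (illustrative shape only)."""
    method: str
    path: str
    status: int


def validate(stubs, spec):
    """Use the spec as a guardrail: return a list of readable failures."""
    failures = []
    for stub in stubs:
        allowed = spec.get((stub.method, stub.path))
        if allowed is None:
            failures.append(f"{stub.method} {stub.path} is not defined in the spec")
        elif stub.status not in allowed:
            failures.append(
                f"{stub.method} {stub.path} returned {stub.status}; "
                f"spec allows {sorted(allowed)}"
            )
    return failures


def refine(generate, spec, skill_text, max_attempts=3):
    """Regenerate until the stubs conform, appending each failure summary
    to the skill text so the next attempt can learn from it."""
    for attempt in range(1, max_attempts + 1):
        stubs = generate(skill_text)
        failures = validate(stubs, spec)
        if not failures:
            return stubs, skill_text, attempt
        skill_text += "\n" + "\n".join(f"- Avoid: {f}" for f in failures)
    raise RuntimeError(f"no valid simulation after {max_attempts} attempts")


def fake_agent(skill_text):
    """Stand-in for a real coding agent: it picks a wrong status code
    until the skill text contains a warning from a previous failure."""
    status = 200 if "Avoid" in skill_text else 500
    return [Stub("GET", "/users/{id}", status)]


stubs, skill, attempts = refine(fake_agent, SPEC, "Generate stubs from the spec.")
```

In this toy run the first attempt fails validation, the failure summary is appended to the skill text, and the second attempt passes. The accumulated skill text is what would carry over to future generations, which is the mechanism behind the "eventual one-shot accuracy" goal described above.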

Keeping the edge: what human skills will become indispensable

The panel closed with a question that has been on many developers’ minds of late: what becomes a human superpower when agents handle the technical work?

Waldemar identified curiosity, product thinking, and courage as key qualities. “Curiosity to challenge assumptions and explore what's now possible; product thinking to shift focus from engineering inputs to business outcomes; and courage to simply start” – to initiate the experiment, instruct the agent, and iterate from there.

Michael pointed to empathy. Agents can write and validate code, but someone still needs to translate what customers actually need into intent, and decide what's worth building. That human layer of interpretation, he argued, is not something that agents will replicate any time soon.

Justin’s answer was desire. "I wake up every day and I want something to happen. I want something to progress – and the models don't. They're passive, but you can recruit them to your desire." Models might be recruited to brainstorm, code, and validate – but without that initiating impulse, they sit idle. It’s up to the developers to put them to work.
