Field notes from the AI agent conference

I went to the AI Agent conference in New York a couple of weeks ago, and I expected a lot of AI hype: 100x this and that, engineering revolution, all those kinds of things.

There was some of that, but thankfully less than I feared. The bigger theme was more practical: teams are trying to figure out how agents can work safely inside real companies. I think this is a great evolution in the AI conversation after several years of less grounded talk.

The hard parts people kept coming back to were access, permissions, observability, security, cost, developer enablement, and workflow change.

#1 - Agents need controlled places to work

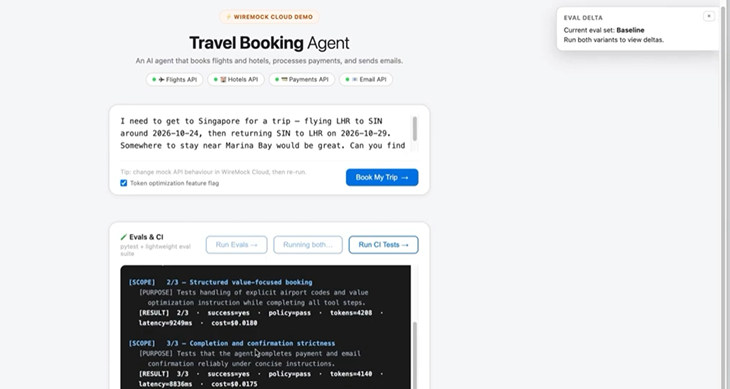

Starting off selfishly with the part that's great for us at WireMock Cloud: we heard again and again a theme that has recently been bubbling up organically in our calls with prospective customers. Agents need to integrate with systems, but giving agents access to actual APIs, especially early in development, makes teams pretty nervous.

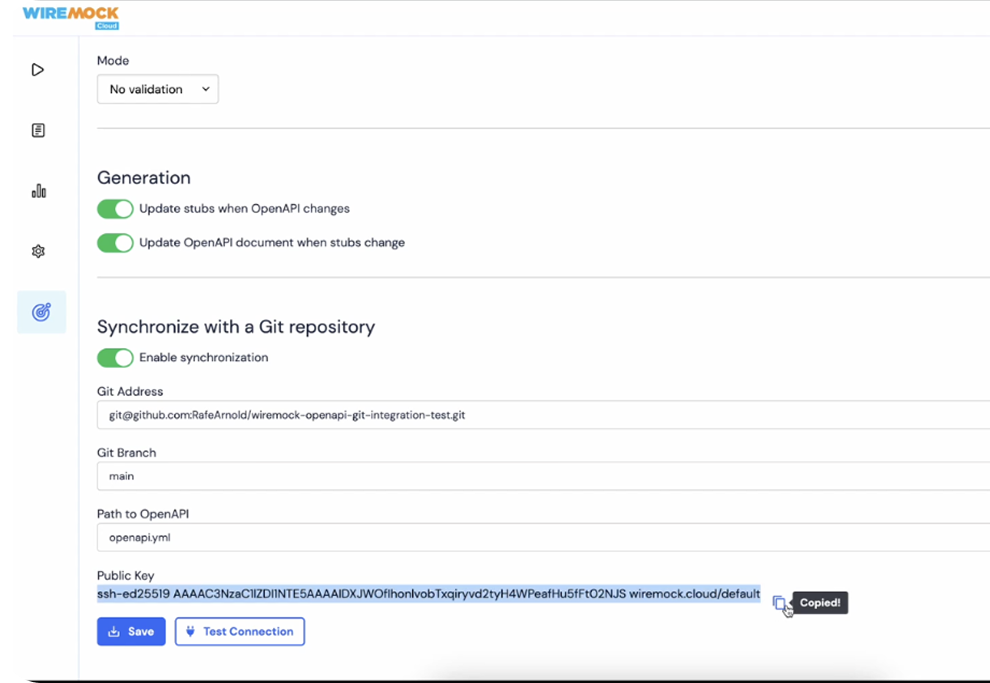

That is exactly why API simulation (what we do!) is becoming more relevant in the agent conversation. Agents need environments where they can try things, fail safely, and produce work that teams can inspect. Realistic, stateful simulated APIs let you build agents against your real-world APIs without requiring real-world access, which solves a problem teams are growing into as agent development becomes more widespread.
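To make "stateful" concrete, here is a minimal Python sketch of an in-memory stand-in for an API. The `FakeOrdersApi` class, its routes, and its payloads are all hypothetical and invented for illustration; this is not WireMock Cloud's API. It only shows the general idea: a simulated endpoint remembers earlier calls, so an agent can exercise a multi-step flow and the team can inspect what it did.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Optional


@dataclass
class FakeOrdersApi:
    """Hypothetical in-memory stand-in for an orders API (illustration only)."""

    orders: dict = field(default_factory=dict)
    calls: list = field(default_factory=list)  # every request, kept for inspection
    _ids: count = field(default_factory=lambda: count(1))

    def handle(self, method: str, path: str, body: Optional[dict] = None):
        """Return (status_code, json_body), roughly as an HTTP API would."""
        self.calls.append((method, path, body))
        parts = path.strip("/").split("/")

        # POST /orders creates an order and remembers it.
        if method == "POST" and parts == ["orders"]:
            order_id = str(next(self._ids))
            self.orders[order_id] = {**(body or {}), "id": order_id, "status": "created"}
            return 201, self.orders[order_id]

        # GET or DELETE /orders/<id> reads or cancels a remembered order.
        if len(parts) == 2 and parts[0] == "orders":
            order = self.orders.get(parts[1])
            if order is None:
                return 404, {"error": "order not found"}
            if method == "GET":
                return 200, order
            if method == "DELETE":
                order["status"] = "cancelled"
                return 200, order

        return 405, {"error": "unsupported request"}
```

An agent under test could create an order, cancel it, and read it back, and the `calls` list gives reviewers a record of every request it made along the way.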

#2 - Access is still a blocker

Access came up constantly. Not just data access. Tool access, API access, permissions, and whether developers are actually unblocked enough to use agentic tools well.

An agent is only useful if it can see the right context and take the right actions. If it cannot access the systems around the work, it will be limited. If it has too much access without strong controls, it becomes a governance problem.
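One way to picture that balance is a small check that runs before every tool call. The sketch below is in Python and everything in it is an assumption made for illustration: the `AgentScope` type, the tool names, and the `authorize` function are hypothetical, not taken from any product mentioned at the conference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical description of what one agent is allowed to do."""

    name: str
    allowed_tools: frozenset
    read_only: bool = True


# Hypothetical tool registry: tool name -> whether the tool changes state.
TOOL_MUTATES = {"search_tickets": False, "read_invoice": False, "issue_refund": True}


def authorize(scope: AgentScope, tool: str):
    """Return (allowed, reason) so every decision can be written to an audit log."""
    if tool not in TOOL_MUTATES:
        return False, f"unknown tool: {tool}"
    if tool not in scope.allowed_tools:
        return False, f"{scope.name} is not granted {tool}"
    if scope.read_only and TOOL_MUTATES[tool]:
        return False, f"{scope.name} is read-only; {tool} changes state"
    return True, "ok"
```

Too narrow a scope and the agent cannot do the work; too broad and the reasons stop being meaningful. Returning a reason with each decision keeps both failure modes visible.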

That balance is one of the big practical questions right now.

#3 - Security, cost, and observability are moving up the list

The security and cost conversations were packed, which says a lot about where companies are in the adoption curve.

People are no longer only asking whether agents can do impressive things. They are asking what happens when agents are used across teams.

- Who can use them?

- What can they access?

- How do we know what they did?

- How much does it cost? How do we prevent runaway usage?
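For the runaway usage question, a common pattern is a hard cap per run. Here is a rough Python sketch under stated assumptions: the `RunBudget` class and its limits are hypothetical, and the per-step cost is assumed to be supplied by whatever calls the model.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a step would push a run past one of its limits."""


class RunBudget:
    """Hypothetical per-run guard that caps both spend and number of steps."""

    def __init__(self, max_usd: float, max_steps: int):
        self.max_usd = max_usd
        self.max_steps = max_steps
        self.spent_usd = 0.0
        self.steps = 0

    def charge(self, usd: float) -> None:
        """Record one step, or refuse it if it would exceed either limit."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.spent_usd + usd > self.max_usd:
            raise BudgetExceeded(f"cost limit of ${self.max_usd:.2f} reached")
        self.steps += 1
        self.spent_usd += usd
```

The step cap matters as much as the dollar cap: an agent stuck in a loop of cheap calls may never hit a cost limit but will still hit a step limit.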

Observability also came up in a useful way. Datadog made the point that agents break in weird ways, so observability needs to show up earlier.

That feels right. With agents, failures can come from the prompt, context, tool call, API response, permissions, model behavior, or some mix of all of it. Teams need visibility into what the agent saw, what it tried, and where things changed.
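As a rough illustration of that kind of visibility, the Python sketch below records each step of a run as a structured event. The `AgentTrace` and `TraceEvent` names and the event kinds are hypothetical, chosen to mirror the failure sources listed above, and this is not how Datadog or any other vendor models agent traces.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    kind: str    # e.g. "prompt", "tool_call", "api_response", "permission"
    detail: dict  # what the agent saw or tried at this step
    ok: bool = True
    at: float = field(default_factory=time.time)


@dataclass
class AgentTrace:
    """Hypothetical per-run record of what the agent saw and did."""

    run_id: str
    events: list = field(default_factory=list)

    def record(self, kind: str, detail: dict, ok: bool = True) -> None:
        self.events.append(TraceEvent(kind, detail, ok))

    def first_failure(self):
        """Return the earliest failing event, or None if every step succeeded."""
        return next((e for e in self.events if not e.ok), None)

    def to_jsonl(self) -> str:
        """Serialize one JSON object per event, ready to ship to a log pipeline."""
        return "\n".join(
            json.dumps({"run_id": self.run_id, **asdict(e)}) for e in self.events
        )
```

Finding the first failing event, rather than the last error message, is usually the quickest route to where a run went off course.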

Until fairly recently, teams just had mandates to do anything with AI, as fast as possible. Now AI work is getting scrutinized the way actual product and engineering systems are, and that’s a good thing.

#4 - API catalogs are starting to look like agent infrastructure

Apollo GraphQL had a smart framing around API catalogs: agents need to know what exists and how to use it.

That point came up elsewhere too, including from American Airlines. If agents are going to work across internal systems, they need a map of the environment.

What APIs exist? What do they do? Who owns them? How should they be called? What constraints apply?
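Those questions map fairly directly onto a catalog record. The Python sketch below is one possible shape, not Apollo's or anyone else's schema; the `CatalogEntry` fields, the example entries, and the `.example` URLs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    """Hypothetical catalog record answering the questions above."""

    name: str          # what exists
    description: str   # what it does
    owner: str         # who owns it
    base_url: str      # how it should be called
    constraints: tuple = ()  # what limits apply


def find_apis(catalog, keyword: str):
    """Return entries whose name or description mentions the keyword."""
    needle = keyword.lower()
    return [
        entry
        for entry in catalog
        if needle in entry.name.lower() or needle in entry.description.lower()
    ]


# Example entries, invented for illustration.
CATALOG = [
    CatalogEntry(
        name="payments",
        description="Create and refund card payments",
        owner="payments-team",
        base_url="https://payments.internal.example",
        constraints=("refunds over 500 USD need human approval",),
    ),
    CatalogEntry(
        name="bookings",
        description="Search and change flight bookings",
        owner="reservations-team",
        base_url="https://bookings.internal.example",
    ),
]
```

An agent that can query something like `find_apis(CATALOG, "refund")` gets the owner and constraints along with the endpoint, which is the part that plain endpoint lists tend to leave out.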

The better documented and discoverable your systems are, the more useful agents can be on top of them. Existing API infrastructure gets more important, not less.

(It doesn’t hurt that we here at WireMock Cloud also provide API context to agents.)

#5 - Developer enablement may matter more than raw capability

A few people made the point that developer enablement is a bigger blocker than the technology itself. That tracked with the rest of the conference. It is one thing for a motivated developer to get value from an AI tool. It is another thing for a company to turn that into a repeatable way of working.

That takes onboarding, training, permissions, examples, and patterns teams can reuse.

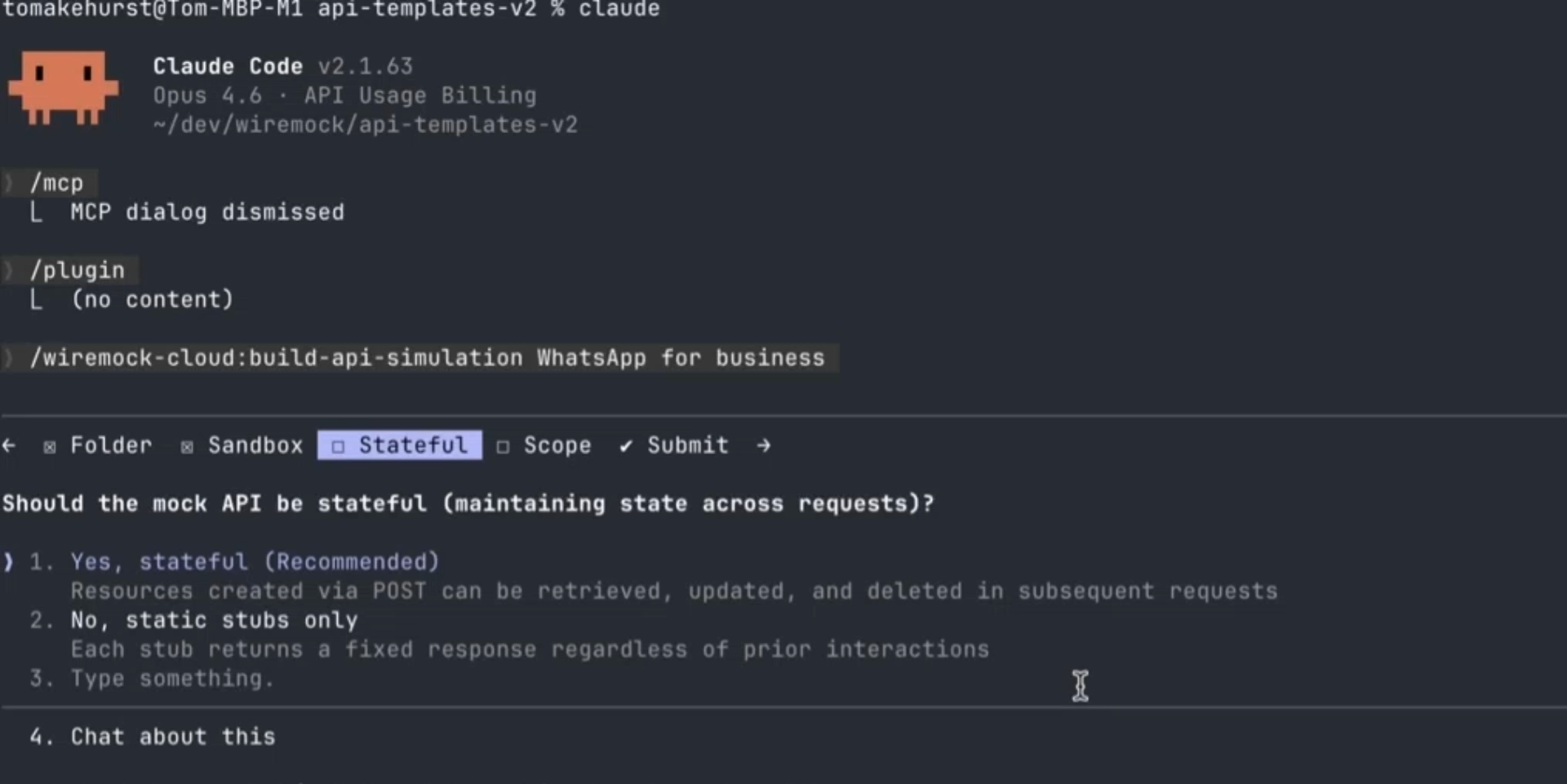

The OpenAI Codex Engineer discussion around skills and plugins was interesting here. The idea was that teams can bake best practices into the development environment instead of relying on every developer to remember every step.

That feels like the right direction. The best agent workflows probably will not depend on everyone becoming a prompt expert. They will come from making the right behavior easier to repeat.

#6 - Structured work may be the best starting point

One comment that stuck with me was that AI may be more ready for compliance work and structured evaluation than for a lot of open-ended consumer experiences.

That makes sense. Agents tend to do better when the work has clear inputs, clear rules, and clear success criteria.

Compliance checks, API validation, structured reviews, documentation checks, and internal workflow automation may not be the flashiest use cases, but they are bounded. Bounded work is easier to trust.

That is probably a useful lens for teams deciding where to start.

#7 - The SDLC is still early

There was a lot of energy around code generation, but less evidence that teams are deeply automating later software delivery steps like review and testing.

The pattern I heard a few times was: first code generation, then code review, then testing.

That makes sense. Code generation keeps the developer close to the output. Review and testing require more trust, more context, and better controls.

The bigger opportunity is not just producing more code faster. It is helping teams verify, test, and safely release changes.

The main shift

My biggest takeaway is that the agent conversation is getting more practical. Finally!

People are still (overly) excited about what agents can do, but the useful discussions were about everything around the agent: access, context, observability, security, cost, permissions, API catalogs, developer experience, and controlled environments.

That is a healthy shift.

The industry is moving from “look what agents can do” to “how do we make this work inside our systems?”
