You Need An API Clean Room for Building AI Agents

Most companies now have at least one AI agent project getting started somewhere.

Some are customer-facing. Some are internal. Some are developer tools. Some are still experiments. But once a team starts building an agent that needs to do real work, the same question comes up quickly:

_What should this thing be allowed to touch while we are still building it?_

Agents need APIs to become useful. They need realistic responses, error states, schemas, and integration paths. But giving an unfinished agent direct access to internal or external APIs creates risk: bad test data, side effects, rate limits, unstable dependencies, and output that cannot be trusted.

That sounds like a new AI problem. It is really an old API dependency problem made bigger.

Teams were already dealing with unavailable services, fragile test environments, expensive third-party APIs, and blocked integration work. Agents just hit those problems faster, more often, and at a greater scale.

That is why clean rooms for API integration and testing are becoming critical.

The API dependency problem was already there

Long before agents, developers were blocked across the SDLC by APIs.

A service was not ready yet. A test environment was down. A third-party API was too expensive to call repeatedly. A third-party sandbox was flaky. A production system was too risky to touch. A dependent team was still finishing its part. A load test failed because a vendor or platform API wasn’t ready for the traffic.

Teams found ways around this, usually pretty inefficiently. They waited, copied sample responses into tests, or used shared environments that were “good enough” until they were not. Some even found WireMock Cloud and realized the problem actually was solvable, because API simulation frees teams from the burden of API dependencies in dev and test.

With everyone rushing to build agents, though, teams are finding these problems harder to ignore than ever before.

Agents amplify weak API environments

A human developer might run into a blocked dependency a few times a day, and they can usually find ways to work around the problem and keep going.

An agent can run into it constantly, and it will not find a workaround on its own. It will just give you bad results, usually confidently.

If an API is unavailable, the agent stalls. If responses are inconsistent, the agent may generate bad code. If the environment is flaky, the agent may learn the wrong thing. If the API has side effects, the agent may create cleanup work nobody wanted.

So “just connect it to the real API” is not always a good early development strategy.

You want realism. But you also need control.

Real APIs are risky too early

It is tempting to give an agent access to real internal or external APIs right away.

That is understandable. Real APIs give real behavior. They expose real edge cases. They show whether the workflow holds up.

But early access can create problems quickly. An unfinished agent may call APIs in the wrong sequence, create bad data, trigger side effects, hit rate limits, depend on unstable services, or do something destructive you hadn’t anticipated, for no better reason than that agents sometimes do.

With external APIs, you also have cost, throttling, and vendor constraints to think about.

This does not mean agents should never touch real APIs. It means they need a safer path before they get there: build on simulated APIs in a clean room first, then cut over to the live APIs at the right stage of maturity.
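One way to make that cutover explicit is to resolve the agent's API target from its maturity stage, so that pointing it at a live API is a deliberate configuration change rather than a default. The sketch below is only an illustration: the stage names, the URLs, and the `resolve_target` helper are all hypothetical and are not part of WireMock Cloud or any other product.

```python
from dataclasses import dataclass

# Hypothetical URLs for illustration only.
SIMULATED_BASE_URL = "http://localhost:8080"
LIVE_BASE_URL = "https://api.example.com"

# Hypothetical stage names: only stages listed here may reach the live API.
LIVE_STAGES = {"pilot", "production"}


@dataclass(frozen=True)
class ApiTarget:
    base_url: str
    simulated: bool


def resolve_target(stage: str) -> ApiTarget:
    """Pick the API an agent may call at a given maturity stage."""
    if stage in LIVE_STAGES:
        return ApiTarget(LIVE_BASE_URL, simulated=False)
    # Early, unknown, or misspelled stages fall back to the clean room.
    return ApiTarget(SIMULATED_BASE_URL, simulated=True)
```

Defaulting to the simulated target means a typo in the stage name keeps the agent inside the clean room instead of sending it to a live system.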

Access is the hard part

A lot of agent development comes back to access.

What can the agent see? What can it call? What data can it use? What actions can it take? How do we know what it did?

Give an agent too little access and it cannot do anything useful. Give it too much and the risk gets uncomfortable fast.

That middle ground is where API clean rooms become useful. Agents can work with simulated or virtualized APIs that behave like real services, but inside an environment built for development and testing.

You are not giving the agent the keys to everything. You are giving it a realistic place to work, with boundaries.

That gives teams enough realism to make progress, enough isolation to reduce risk, and enough visibility to understand what happened when the agent does something strange.
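To make "boundaries" and "visibility" concrete, here is a minimal sketch of a local stub server built only on the Python standard library. It answers a fixed set of requests, rejects everything else, and records every call in a journal. The endpoint, the response data, and the `start_clean_room` and `call` helpers are hypothetical, and this is not how WireMock Cloud is implemented; it only illustrates the idea at small scale.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Canned responses keyed by (method, path). Hypothetical endpoint and data.
STUBS = {
    ("GET", "/orders/42"): (200, {"id": 42, "status": "shipped"}),
}


def start_clean_room(stubs):
    """Start a local stub server on a free port; return (server, journal)."""
    journal = []  # every request the agent makes is recorded here

    class Handler(BaseHTTPRequestHandler):
        def _respond(self):
            # Drain any request body so the connection closes cleanly.
            self.rfile.read(int(self.headers.get("Content-Length", 0)))
            journal.append((self.command, self.path))
            # Anything without a stub is outside the boundary: answer 404.
            status, body = stubs.get(
                (self.command, self.path),
                (404, {"error": "no stub for this request"}),
            )
            payload = json.dumps(body).encode()
            self.send_response(status)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(payload)))
            self.end_headers()
            self.wfile.write(payload)

        do_GET = do_POST = do_DELETE = _respond

        def log_message(self, *args):
            pass  # keep the console quiet; the journal is the record

    server = ThreadingHTTPServer(("127.0.0.1", 0), Handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, journal


def call(port, method, path):
    """Make one request as an agent would; return (status, parsed body)."""
    conn = HTTPConnection("127.0.0.1", port, timeout=5)
    conn.request(method, path)
    resp = conn.getresponse()
    result = resp.status, json.loads(resp.read())
    conn.close()
    return result
```

If the agent tries a `DELETE` that nobody stubbed, it gets a 404 and nothing is deleted anywhere, but the attempt still shows up in the journal for the team to review.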

Clean rooms make AI development safer

This is probably the most important part.

A lot of teams want the productivity gains from AI, but they do not want to hand an unfinished agent direct access to sensitive systems, production data, vendor APIs, or fragile shared environments.

That is a reasonable instinct.

A clean room gives the agent a bounded place to work. It can call APIs, inspect responses, handle errors, and test integration paths without touching systems it should not be touching yet.

The agent can try things. It can fail. It can generate code against known behavior. And when something goes wrong, the blast radius is smaller.

That is what makes AI development safer. Not because the agent suddenly becomes perfect, but because the environment is designed for imperfection.

And that safety helps teams move faster. If every agent project needs a long production access debate before it can do anything useful, adoption slows down. If teams can start in a controlled API environment, they can learn faster and with more confidence.

Where WireMock Cloud fits

We have been hearing this more from prospects building AI and agent capabilities.

They want agents to test integrations, generate code, and validate behavior without giving them direct access to every internal or external system. They need the agent to work with realistic API behavior, but they also need boundaries.

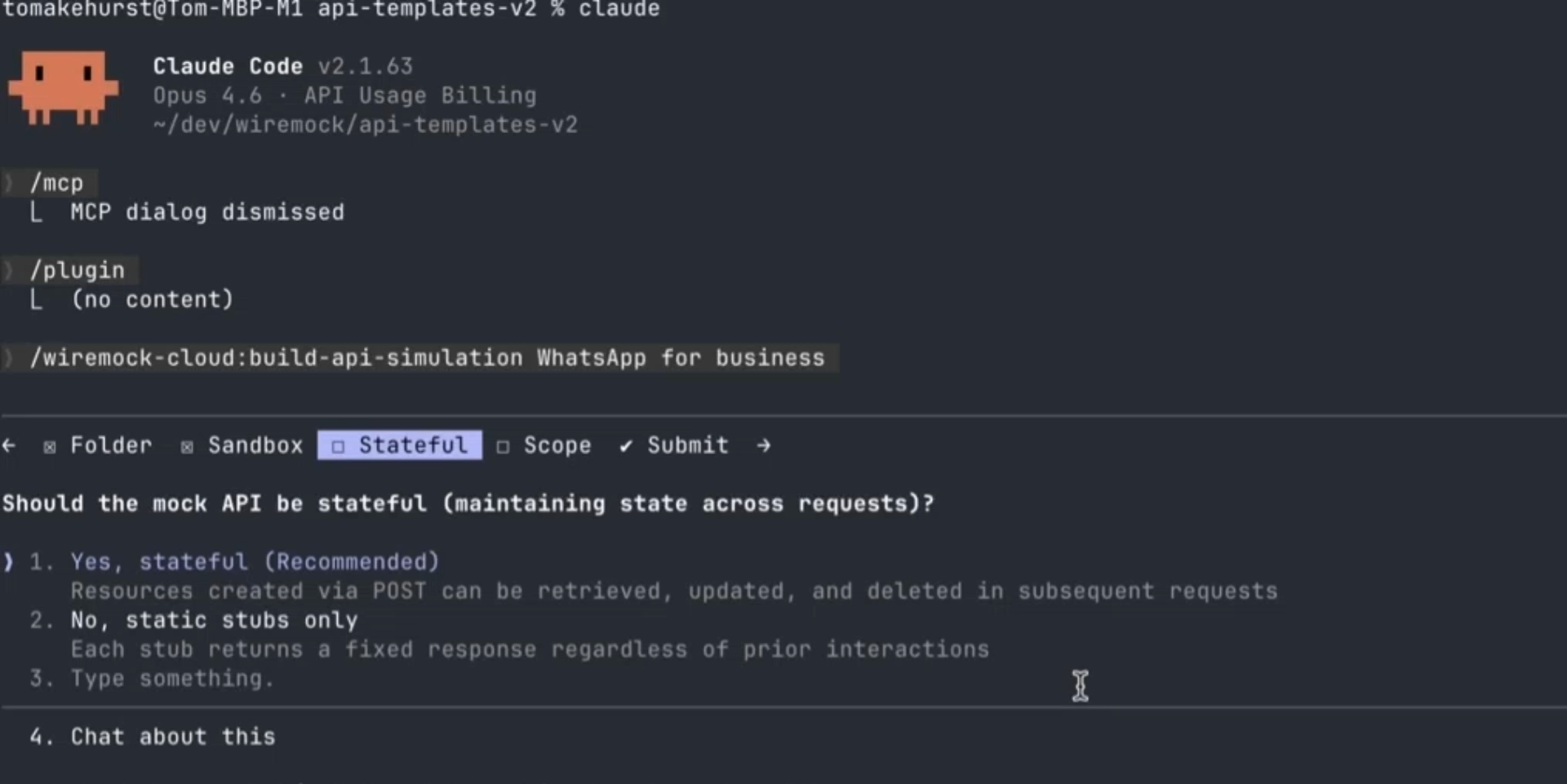

That is exactly the space WireMock Cloud is built for.

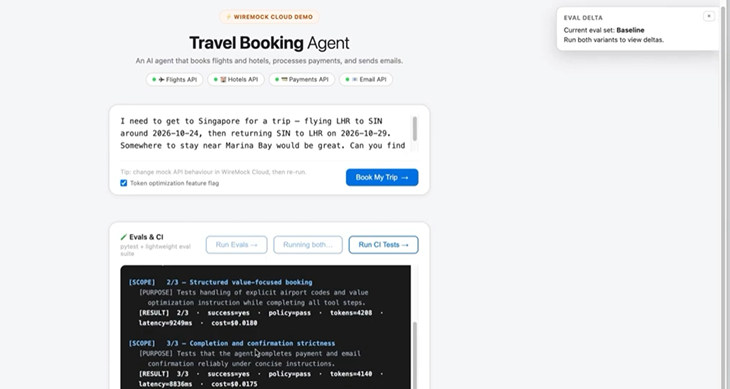

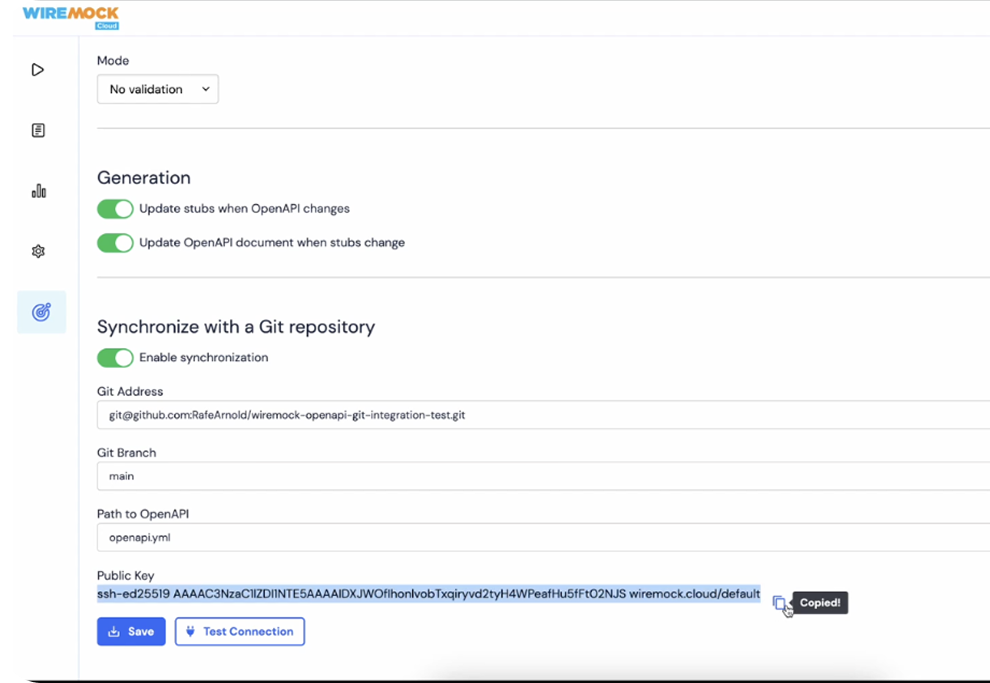

WireMock Cloud provides clean rooms for API integrations and testing. Teams can simulate real API behavior, isolate dependencies, and give developers or agents a controlled environment to work in.

For human developers, that removes blockers across the SDLC.

For agents, it creates a safer operating environment where they can do useful work without creating unnecessary risk.

The ROI is not just faster code

The ROI story is not only “agents write code faster.”

That is the obvious pitch, but it is too narrow.

The bigger opportunity is removing friction from integration work: waiting for dependent services, waiting for test environments, waiting for third-party access, waiting for stable data, or waiting for another team to reproduce an issue.

Those delays already cost teams time. Agents can make the cost of those delays more visible, because they run into the same blockers faster and more often.

Clean API environments help teams build earlier, test more reliably, reproduce failures faster, and avoid unnecessary calls to paid or rate-limited APIs.

You are not just speeding up one task. You are making the development loop less blocked.

That is where the ROI starts to show up.

The goal is controlled realism

A clean room should not be a toy sandbox.

If mock behavior is too shallow, the agent may generate code that works against a happy-path response but fails against the real API.

That is not useful. It just moves the problem later.

The goal is controlled realism. Good API simulation should reflect real service behavior, including error cases, edge cases, authentication patterns, and the kinds of responses teams actually see.
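As a rough illustration of what going beyond the happy path can look like, the sketch below simulates a single endpoint that also returns authentication failures, missing resources, and rate-limit errors. Everything in it is assumed for the example: the `SimulatedOrdersApi` class, the token, the order data, and the status codes chosen for each case. A real simulation would be derived from the actual service's contract and observed traffic.

```python
from dataclasses import dataclass, field


@dataclass
class SimulatedOrdersApi:
    """Hypothetical orders API with a happy path plus common failure modes."""

    valid_token: str = "test-token"
    rate_limit: int = 3  # authenticated calls allowed before a 429
    calls: int = 0
    orders: dict = field(
        default_factory=lambda: {"42": {"id": 42, "status": "shipped"}}
    )

    def get_order(self, order_id, token=None):
        """Return (status, body) roughly the way a real service might."""
        # Authentication is checked first and does not count toward the limit.
        if token != self.valid_token:
            return 401, {"error": "unauthorized"}
        self.calls += 1
        if self.calls > self.rate_limit:
            return 429, {"error": "rate limit exceeded", "retry_after_seconds": 1}
        order = self.orders.get(str(order_id))
        if order is None:
            return 404, {"error": "order not found"}
        return 200, order
```

An agent that only ever sees the 200 response may never write retry or re-authentication logic. Exposing the 401, 404, and 429 paths in the simulation gives that code something to be tested against before it meets the real API.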

That gives agents enough reality to do meaningful work, without giving them unlimited access too soon.

The shift

AI agents are forcing teams to look again at problems they already had: API dependencies, test instability, access control, integration safety, observability, cost, and repeatability.

These were already hard. Agents just make them harder to ignore.

The teams that get value from agent development will not be the ones that connect agents to everything and hope for the best. They will be the ones that give agents safe, realistic places to work, then expand access as the workflow proves itself.

That is why clean rooms matter.

They make AI development safer, more practical, and easier to scale.
